The Arkansas High Performance Computing Center (AHPCC) provides expertise, high performance computing hardware, storage, support services, and training to enable computationally-intensive and data-intensive research. The AHPCC is available to faculty, staff, and students at all of the Arkansas public universities, and to their collaborators inside and outside of the state. Most AHPCC services are provided free of charge to eligible researchers. Priority condo access is also available. AHPCC is a University of Arkansas Core Facility.

AHPCC @ UA

Computational Resources

A quick look at system specifications for Pinnacle, Trestles, and Razor, plus other external resources available to researchers

Research Projects

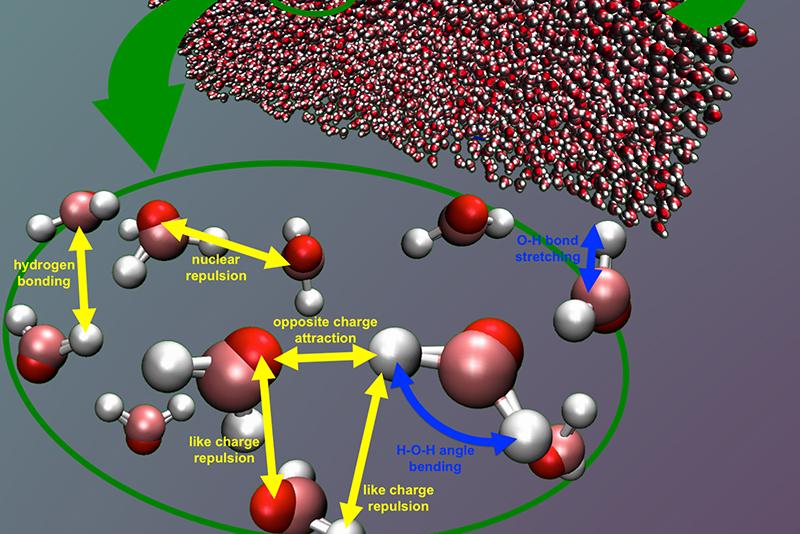

Learn how high performance computing is used to answer research questions across different disciplines

Learning Resources

Request a user account, check system stats, and visit the HPC Wiki for how-tos.

Contact Us

New or existing user? Contact HPC staff with your questions!